AI-Powered MSP Knowledge Platform

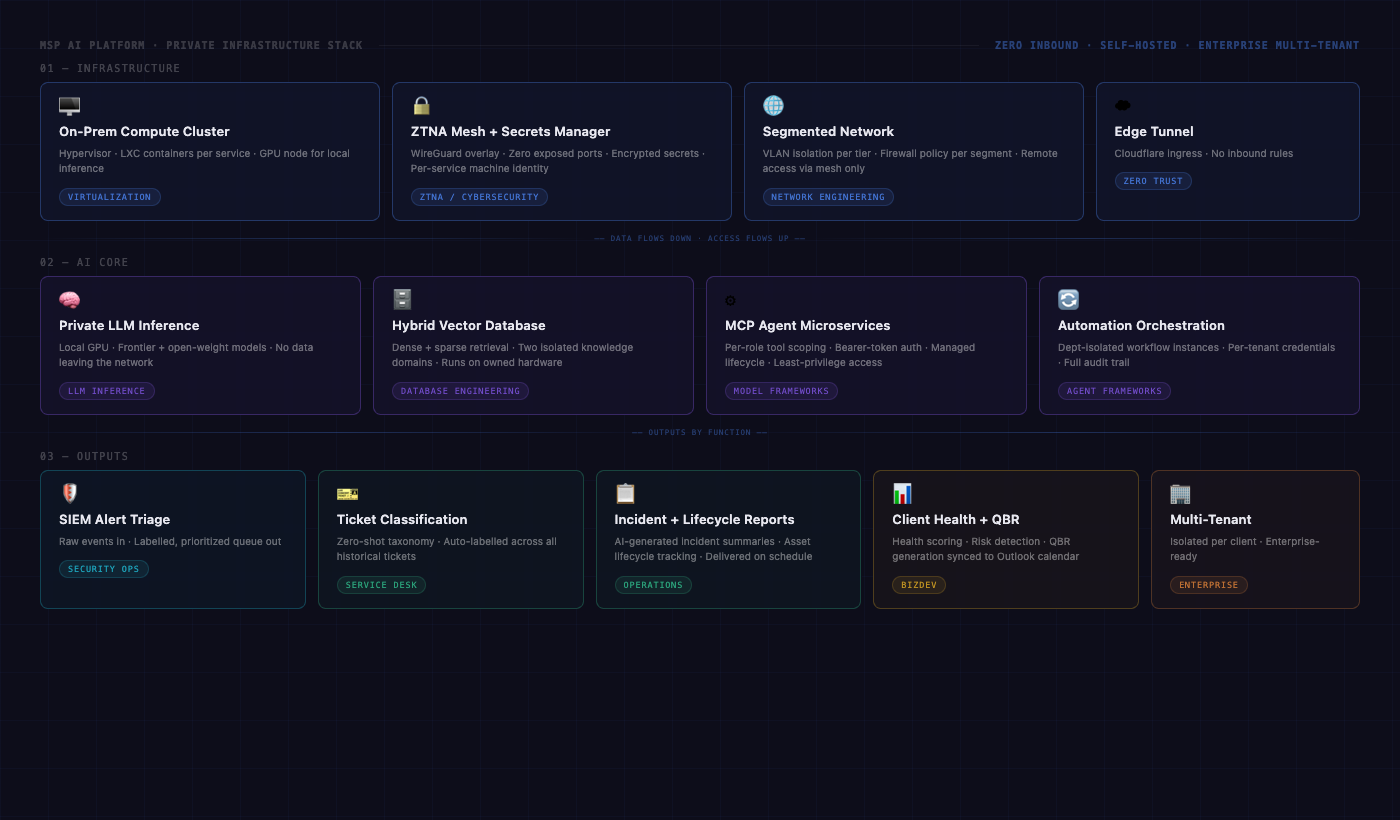

Six acquisitions. Six tech stacks. Six data silos. One private AI layer to connect them: designed, built, and shipped to production in a week, on infrastructure the business owns.

Problem

The client was a managed service provider that had grown through six acquisitions: six companies, six tech stacks, six data silos, and no shared intelligence layer connecting any of it. Its portfolio spanned clients in legal, manufacturing, nonprofit, education, and enterprise, all of whom were about to start asking about AI. The question was not whether to build. It was whether the business would own the stack or rent it from a vendor at a multiple of the cost, and whether a single person could wire six disconnected environments together in time to matter.

What I built

End-to-end private AI infrastructure, architected and delivered by one person in roughly a week:

- A cluster of self-hosted MCP (Model Context Protocol) microservices that give agents scoped, audited access to business tools without exposing credentials

- Two separate knowledge bases (one for developer reference, one for vendor and platform documentation) with retrieval tuned per use case

- An automated BizDev pipeline that enriches and researches prospects and drafts personalized outreach, with a human approval gate before anything goes out

- AI-driven SIEM alert triage that reads raw security events and posts a labeled, prioritized summary to the operations channel

- Zero-shot classification of historical support tickets on local GPU, giving the business a clean taxonomy of what it actually gets paid to fix

- PII scrubbing and FedRAMP exclusion filters between data ingestion and the model layer, so regulated content never reaches the models

- Private mesh networking and a self-hosted secrets manager so the whole thing runs without a single hardcoded credential or open inbound port
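The PII and FedRAMP filter stage described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the production code: the `Record` shape, the `fedramp` tag, and the three regex patterns are hypothetical stand-ins, and real PII detection needs far more than a few regular expressions. What it shows is the ordering: excluded records are dropped outright, and everything that survives is scrubbed before it can reach the model layer.

```python
import re
from dataclasses import dataclass, field


# Hypothetical record shape; the real ingestion schema is not described.
@dataclass
class Record:
    text: str
    tags: set[str] = field(default_factory=set)


# Illustrative patterns only (assumption): production PII detection needs much more.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

# Hypothetical tag marking content that falls under FedRAMP boundaries.
EXCLUDED_TAGS = {"fedramp"}


def scrub(text: str) -> str:
    """Replace each PII match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def gate(records):
    """Drop excluded records entirely; yield scrubbed copies of the rest."""
    for rec in records:
        if rec.tags & EXCLUDED_TAGS:
            continue  # regulated content is never passed downstream
        yield Record(text=scrub(rec.text), tags=set(rec.tags))


if __name__ == "__main__":
    docs = [
        Record("Ticket from jane.doe@example.com, SSN 123-45-6789"),
        Record("Agency boundary diagram", tags={"fedramp"}),
    ]
    for rec in gate(docs):
        print(rec.text)  # prints: Ticket from [EMAIL], SSN [SSN]
```

Dropping excluded records, rather than redacting them, is the conservative design choice: a redaction pattern can miss something, but a record that never enters the pipeline cannot leak.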

What I learned

The hard part was not the AI. It was the operating discipline. Vector indexes, agent access control, credential hygiene, network segmentation, and lifecycle management are all unforgiving at production scale. I could ship AI in a week because I had already spent twenty years solving those problems. The AI just rode on top.

The data existed. Six companies' worth of it. But data from six separate acquisitions is not a knowledge base; it is a pile. Before the AI could do anything useful, the data had to make sense: cleaned, structured, chunked, embedded, and indexed in a way that retrieval could actually trust. That work is invisible when it is done right and catastrophic when it is skipped. Most people skip it and then wonder why their RAG pipeline hallucinates.
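The cleaning and chunking steps can be sketched as below, as a minimal example under stated assumptions. The `Chunk` fields, the word-window sizes, and the use of a content hash as a document ID are hypothetical choices, not details from the original build, and the sketch stops before embedding and indexing because the embedding model and vector store are not named here. The point it illustrates is that every chunk carries its provenance, so a retrieved passage can always be traced back to the acquisition and document it came from.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    source: str  # hypothetical: which acquired company or system the text came from
    doc_id: str  # short content hash of the cleaned document
    index: int   # position of this chunk within the document
    text: str


def clean(text: str) -> str:
    """Strip stray control characters and collapse all whitespace runs to one space."""
    text = re.sub(r"[\x00-\x08\x0b\x0c\x0e-\x1f]", "", text)
    return re.sub(r"\s+", " ", text).strip()


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split into windows of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def prepare(source: str, raw: str, size: int = 200, overlap: int = 40) -> list[Chunk]:
    """Clean one raw document and return provenance-tagged chunks ready for embedding."""
    text = clean(raw)
    # Hashing the cleaned text means re-ingesting an identical document yields the same ID.
    doc_id = hashlib.sha256(text.encode()).hexdigest()[:12]
    return [Chunk(source, doc_id, i, c) for i, c in enumerate(chunk_words(text, size, overlap))]


if __name__ == "__main__":
    sample = "Ticket 4411:\tprinter offline\x0c after   firmware update."
    for ch in prepare("acquisition-a", sample, size=4, overlap=1):
        print(ch.source, ch.doc_id, ch.index, ch.text)
```

Overlapping windows are one common way to keep a sentence that straddles a boundary retrievable from either side; the right window size depends on the embedding model and the documents.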